Lucinity featured in Risk.net

"AI and machine learning tend to be better than humans at convergent thinking. Humans, on the other hand, tend to excel in divergent thinking – the ability to come up with new solutions, underlined by a greater sense of nuance."

In a recent article, Natacha Maurin from Risk.net interviews several financial crime experts about the bunq vs. DNB case in the Netherlands. Lucinity’s Financial Crime SME, Francisco Mainez, shares his thoughts on the importance of deploying AI and humans where their respective strengths lie. Explainability is also central to ensuring that AML software best serves compliance teams and regulators.

How AI became respectable for AML controls

The ruling in favor of bunq's use of AI for anti-money laundering (AML) controls is a significant development for the financial industry. It shows that AI-based AML systems can effectively detect suspicious transactions and help banks comply with regulations, and that regulators need to weigh both the benefits and the limitations of such systems when evaluating banks' compliance with AML rules.

However, the ruling also underlines the importance of human oversight and critical thinking in AML compliance, for tasks such as identifying politically exposed persons (PEPs) and establishing sources of funds. These tasks require judgment and analysis that may not be achievable through AI alone, which is why AML systems need to be user-friendly and to augment the brilliance of compliance teams.

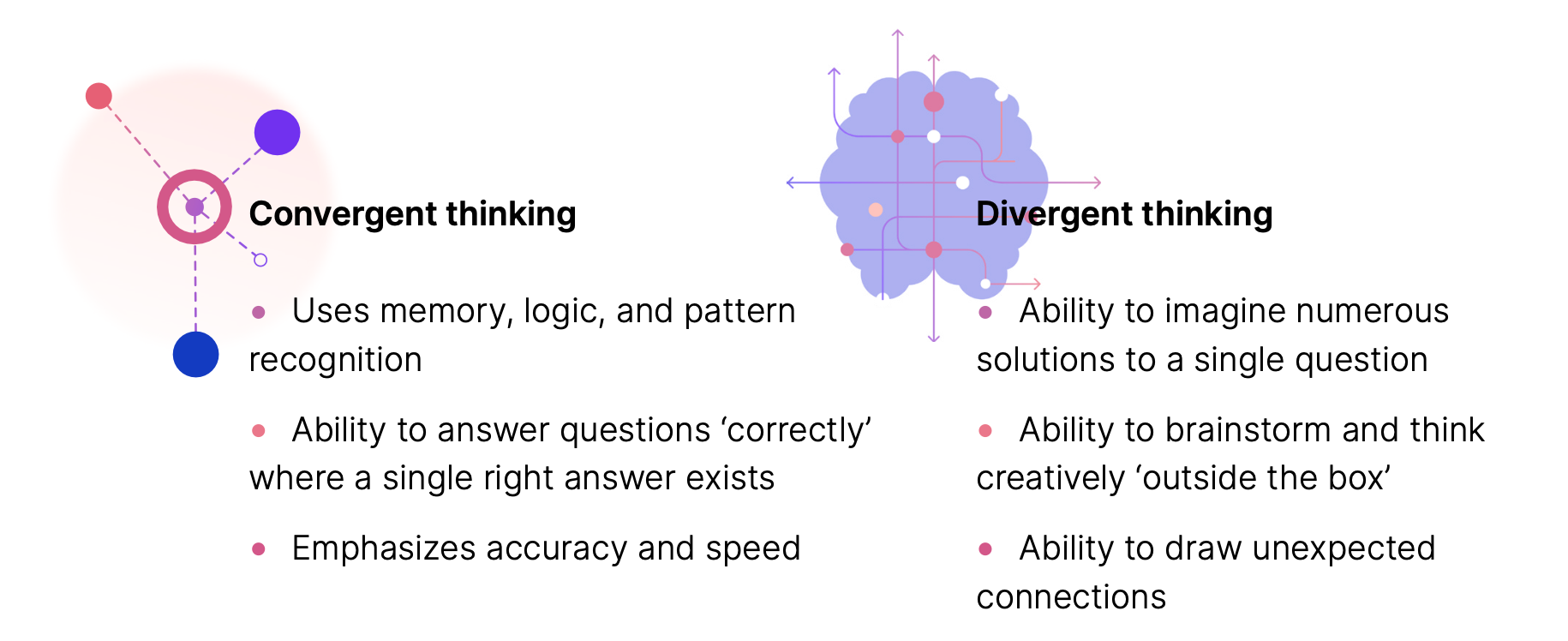

Convergent vs. Divergent Thinking

In the article, Francisco Mainez explains the difference between convergent and divergent thinking and where AI and humans fit best within AML processes.

He notes that AI and machine learning are typically more efficient than humans at "convergent thinking," which involves following a logical sequence of steps to solve a problem. This is because computers have the advantage of greater memory and data-processing capacity, making it easier for them to learn rules and apply them to problem-solving. Conversely, humans are generally better at "divergent thinking," which involves generating new and innovative solutions, often with a nuanced perspective.

Lucinity’s white paper, Humans <3 Intelligence, explores in more depth how to combine artificial and human intelligence.

Francisco adds that human involvement is crucial when macro events cause changes that AI may not have the context to understand. For example, when regulations change, humans need to intervene, since each alteration in the law entails complex decisions about how to modify existing systems to meet policy requirements. As Francisco points out, these decisions call for divergent thinking, which is better suited to humans.

AI and Explainability

Overall, the bunq case demonstrates the potential of AI to strengthen AML controls and improve the efficiency and accuracy of AML compliance. However, it is equally important that AML software uses AI in a way that is explainable. As the article notes:

Explainability remains high on regulators’ list of concerns with the technology, highlighted by the European Banking Authority in a 2020 report on big data and advanced analytics.

Although there are various methods for demonstrating explainability, experts argue that the fundamental challenge is presenting the AI's decision-making process in a way that translates machine reasoning into human logic. Explainability is, for example, at the heart of Lucinity’s Actor Intelligence, which brings customer behavior to life through visualizations that tell a story and present a contextual narrative.
