The Role of Gen AI in Financial Scams: Balancing Innovation and Security

Explore the role of Gen AI in financial scams, its advanced fraud detection capabilities, and the necessary security measures to prevent misuse.

Financial scams have become increasingly sophisticated in recent years, using advanced technologies to deceive individuals and organizations. U.S. consumers reported losing nearly $8.8 billion to fraud in 2022, an increase of more than 30% over the previous year, and losses have continued to climb. This trend signals the need for more advanced solutions that can keep pace with increasingly complex scams.

Generative AI (Gen AI), a subset of artificial intelligence that can analyze existing content and create new content, is relevant here because it offers improved fraud detection and prevention capabilities compared to traditional methods. However, it can also be misused to facilitate scams and can introduce new vulnerabilities, making new security measures and regulations essential.

This article discusses the positive role of Gen AI in combating financial scams, along with the security measures needed to prevent its misuse, with the aim of helping institutions balance AI innovation and safety.

Generative AI has the potential to significantly enhance fraud detection and prevention by generating new data and learning from existing datasets. This ability allows it to identify patterns and anomalies that traditional methods may overlook. Here are the key underlying technologies that make generative AI a powerful tool in combating financial scams:

Autoencoders

Autoencoders are neural networks that learn to compress data into a lower-dimensional representation and then reconstruct it back to its original form. This capability is useful for identifying anomalies in large datasets. For instance, an autoencoder trained on normal transaction data will reconstruct fraudulent transactions poorly, and the resulting high reconstruction error flags them for further investigation.
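The reconstruction-error idea can be illustrated with a deliberately small sketch. It is not a neural network: it swaps in the closed-form linear autoencoder (equivalent to projecting onto the first principal component) so that the example needs no external libraries. The two features, the sample values, and the threshold rule (three times the largest training error) are assumptions made purely for illustration.

```python
import math

def fit_linear_autoencoder(rows):
    """Fit a 2-feature -> 1-value -> 2-feature linear autoencoder.

    For a linear autoencoder the best bottleneck is the top principal
    direction of the data, so it is computed here in closed form.
    """
    n = len(rows)
    mean = (sum(r[0] for r in rows) / n, sum(r[1] for r in rows) / n)
    centered = [(r[0] - mean[0], r[1] - mean[1]) for r in rows]
    sxx = sum(a * a for a, _ in centered)
    syy = sum(b * b for _, b in centered)
    sxy = sum(a * b for a, b in centered)
    # Orientation of the major axis of the 2x2 covariance matrix.
    theta = 0.5 * math.atan2(2 * sxy, sxx - syy)
    return mean, (math.cos(theta), math.sin(theta))

def reconstruction_error(row, mean, direction):
    """Encode to one number, decode back, and return the squared error."""
    a, b = row[0] - mean[0], row[1] - mean[1]
    code = a * direction[0] + b * direction[1]        # encoder
    rec = (code * direction[0], code * direction[1])  # decoder
    return (a - rec[0]) ** 2 + (b - rec[1]) ** 2

# Hypothetical features: (transaction amount, customer's typical amount),
# both in hundreds of dollars. Normal transactions keep the two close.
normal = [(1.0, 1.1), (2.0, 1.9), (3.0, 3.2), (4.0, 3.9), (5.0, 5.1), (6.0, 5.8)]
mean, direction = fit_linear_autoencoder(normal)

# Assumed rule: flag anything reconstructed 3x worse than the worst training row.
threshold = 3 * max(reconstruction_error(r, mean, direction) for r in normal)

def is_anomalous(row):
    return reconstruction_error(row, mean, direction) > threshold

print(is_anomalous((4.5, 4.4)))  # amount close to the customer's usual level
print(is_anomalous((6.0, 1.0)))  # amount far above the customer's usual level
```

A production system would more likely use a deep autoencoder over many features, but the flagging logic (compare reconstruction error to a threshold learned from normal data) would stay the same.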

Large Language Models (LLMs)

LLMs, such as OpenAI's GPT-4, can process and understand vast amounts of textual data. In the context of financial fraud, LLMs can analyze customer communications, transaction descriptions, and other textual data to detect suspicious patterns. For example, LLMs can be trained to recognize the language used in phishing emails or to identify inconsistencies in customer service interactions that may indicate fraud.

Generative AI can also simplify the explanations for flagged transactions using LLMs. By generating clear and comprehensive insights in natural language, it helps investigators and decision-makers understand why a particular transaction was flagged, thereby improving transparency and trust in the fraud detection system.

Generative Adversarial Networks (GANs)

GANs consist of two neural networks trained against each other: a generator that creates synthetic data and a discriminator that tries to tell the synthetic data apart from real data. In fraud detection, GANs can generate synthetic transaction data that mimics real-world patterns. This synthetic data can be used to train machine learning models while reducing exposure of sensitive customer information, thereby enhancing privacy and security.

Generative AI's ability to learn from both legitimate and fraudulent transactions enables it to identify complex fraud patterns that traditional methods might miss. By continuously updating its models with new data, generative AI remains adaptable to evolving fraud tactics, making it an important tool for financial institutions aiming to stay ahead of fraudsters.

Generative AI's capabilities extend into various practical applications that enhance fraud detection and prevention. These applications leverage the unique strengths of generative models to provide more accurate, efficient, and scalable solutions for financial institutions.

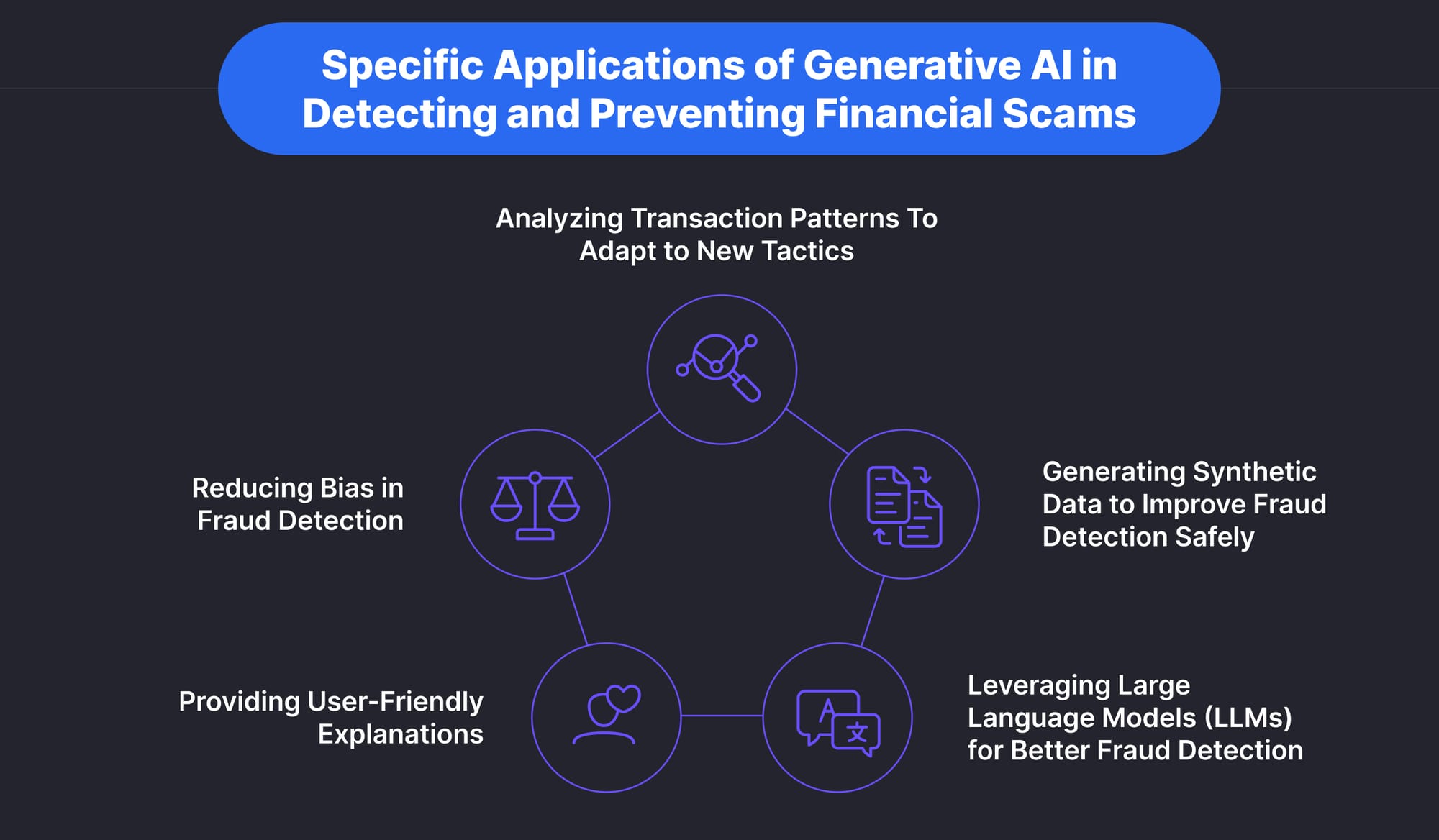

Analyzing Transaction Patterns To Adapt to New Tactics

Generative AI can analyze vast amounts of transaction data to identify unusual patterns that may indicate fraud. For example, it can detect anomalies such as sudden spikes in transaction amounts or unusual transaction locations that deviate from a customer’s typical behavior. By continuously learning from new transaction data, generative AI models can adapt to new fraud techniques as they emerge.
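As a rough illustration of the two anomaly types mentioned above (amount spikes and unusual locations), here is a simple statistical baseline. It is not a generative model, and the field names, sample history, and the z-score limit of 3 are assumptions chosen for the example.

```python
from statistics import mean, stdev

def flag_transaction(history, txn, z_limit=3.0):
    """Return the reasons a transaction deviates from the customer's history."""
    reasons = []
    amounts = [t["amount"] for t in history]
    mu, sigma = mean(amounts), stdev(amounts)
    # Amount spike: far above the customer's usual spending.
    if sigma > 0 and (txn["amount"] - mu) / sigma > z_limit:
        reasons.append("amount spike")
    # Unusual location: a country never seen in this customer's history.
    if txn["country"] not in {t["country"] for t in history}:
        reasons.append("unusual location")
    return reasons

# Hypothetical customer history.
history = [{"amount": a, "country": "US"} for a in (40.0, 55.0, 38.0, 62.0, 47.0, 51.0)]

print(flag_transaction(history, {"amount": 49.0, "country": "US"}))
print(flag_transaction(history, {"amount": 900.0, "country": "FR"}))
```

Recomputing the statistics as new transactions arrive is the simplest form of the continuous learning described above; a learned model would replace the fixed rules with patterns inferred from data.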

Generating Synthetic Data to Improve Fraud Detection Safely

GANs are particularly effective at generating synthetic data that closely resembles real transaction data. This synthetic data can be used to train machine learning models for fraud detection without exposing sensitive customer information. Synthetic datasets help protect privacy while providing rich training material, which enhances the models' ability to detect sophisticated fraud schemes.

By creating a dataset of synthetic fraudulent transactions, financial institutions can train AI models to recognize fraud patterns. This approach helps in detecting new types of fraud that might not be present in the original training data. Synthetic data can also be used to simulate various fraud scenarios, ensuring that the models are robust and capable of handling a wide range of fraudulent activities.
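To make the idea of synthetic fraud records concrete, the sketch below uses a much simpler stand-in for a GAN: it fits a mean and standard deviation to each feature of a handful of example rows and samples new rows from independent Gaussians. The features and values are hypothetical. Unlike a GAN, this approach ignores correlations between features and enforces no realism constraints (for example, it could occasionally produce a negative value).

```python
import random
from statistics import mean, stdev

def fit_feature_stats(rows):
    """Per-feature (mean, standard deviation) of the example rows."""
    columns = list(zip(*rows))
    return [(mean(c), stdev(c)) for c in columns]

def sample_synthetic(stats, n, seed=0):
    """Draw n synthetic rows, one independent Gaussian per feature."""
    rng = random.Random(seed)
    return [tuple(rng.gauss(mu, sigma) for mu, sigma in stats) for _ in range(n)]

# Hypothetical fraud examples: (amount in dollars, account age in days).
real_fraud = [(950.0, 12.0), (1200.0, 30.0), (1010.0, 7.0), (1340.0, 21.0)]

stats = fit_feature_stats(real_fraud)
synthetic = sample_synthetic(stats, 100)

print(len(synthetic))
print(synthetic[0])
```

The synthetic rows share the overall statistics of the examples without copying any single record, which is the property that makes synthetic data attractive for training.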

Leveraging Large Language Models (LLMs) for Better Fraud Detection

LLMs can process and understand unstructured data, such as customer communications and feedback, to identify inconsistencies or signs of distress that may indicate fraud. They can also analyze contextual information and draw detailed inferences, giving a more holistic view of potential fraudulent activities.

Providing User-Friendly Explanations

Generative AI can simplify the explanations for why certain transactions are flagged as fraudulent. It can produce detailed summaries and visualizations that highlight the key factors leading to the detection of fraud.

Generative AI also provides clear and understandable explanations to enhance transparency, support quick action, and build trust among stakeholders. This makes it easier for investigators, decision-makers, and even customers to understand the reasoning behind the fraud detection system’s decisions.
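One way such explanations might be requested is to pass the factors behind a flag to an LLM in a structured prompt. The sketch below only assembles the prompt text and does not call any model; the function name, the prompt wording, and the example factors are all hypothetical.

```python
def build_explanation_prompt(txn_id, factors):
    """Assemble a prompt asking an LLM to explain a flagged transaction."""
    lines = [f"- {name}: {detail}" for name, detail in factors]
    return (
        "Explain in plain language why the transaction below was flagged.\n"
        "Use only the listed factors and do not speculate beyond them.\n"
        f"Transaction: {txn_id}\n"
        "Factors:\n" + "\n".join(lines)
    )

# Hypothetical output of an upstream detection step.
factors = [
    ("amount spike", "900.00 USD against a typical 50.00 USD"),
    ("unusual location", "first transaction from this country"),
]

prompt = build_explanation_prompt("TXN-001", factors)
print(prompt)
```

Restricting the model to the listed factors is a design choice intended to keep the explanation tied to what the detection system actually observed.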

Reducing Bias in Fraud Detection

Using Gen AI in financial scam prevention can help reduce bias in fraud detection models by creating balanced datasets that accurately represent all customer profiles and transaction types. This helps keep the models from unfairly targeting specific groups of customers while maintaining a high level of accuracy across diverse scenarios.

By generating synthetic data that includes a wide variety of transaction types and customer behaviors, generative AI ensures that the training datasets are balanced and representative. Models trained on unbiased data are more likely to provide fair and equitable fraud detection, reducing the risk of discriminatory practices.
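The balancing step can be sketched with random oversampling, the simplest alternative to generating new synthetic rows: under-represented groups are padded with randomly chosen copies of their own rows until every group is the same size. The `segment` field and the group sizes are assumptions for illustration.

```python
import random
from collections import Counter

def oversample_to_balance(rows, group_of, seed=0):
    """Pad smaller groups with random copies of their rows until sizes match."""
    rng = random.Random(seed)
    groups = {}
    for row in rows:
        groups.setdefault(group_of(row), []).append(row)
    target = max(len(g) for g in groups.values())
    balanced = []
    for members in groups.values():
        balanced.extend(members)
        balanced.extend(rng.choice(members) for _ in range(target - len(members)))
    return balanced

# Hypothetical training rows: 8 retail customers, 2 small-business customers.
rows = (
    [{"segment": "retail", "amount": 50.0 + i} for i in range(8)]
    + [{"segment": "small_business", "amount": 400.0 + i} for i in range(2)]
)

balanced = oversample_to_balance(rows, lambda r: r["segment"])
print(Counter(r["segment"] for r in balanced))
```

Duplicated rows add no new information, which is why a generative model that produces varied but realistic rows can be a better fit for the same step.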

These applications demonstrate how generative AI can be integrated into various aspects of fraud detection and prevention, providing more effective and scalable solutions for financial institutions.

AI innovations bring several benefits, including advanced protection against financial scams. However, they also introduce new vulnerabilities and opportunities for misuse, which may lead to an increase in fraud. It is therefore important to balance AI innovation with the security measures and regulations that minimize misuse and vulnerabilities. The sections below look first at the risks and countermeasures linked to misuse, and then at those linked to vulnerabilities.

Misuse of Generative AI to Facilitate Financial Scams and Countermeasures

While generative AI has great potential for spotting and preventing fraud, it also presents new opportunities for fraudsters. Criminals can misuse AI to create sophisticated scams, making it imperative for regulatory bodies and technology providers to develop effective countermeasures. Here are some ways Gen AI can be misused in financial scams, with examples:

Phishing and Social Engineering

Generative AI can create highly convincing phishing emails and messages that mimic legitimate communications, making it difficult for recipients to detect fraud.

- Example: AI-generated emails that replicate the style and tone of company executives to trick employees into transferring funds or disclosing sensitive information.

Deepfakes and Identity Fraud

AI technologies can generate realistic videos and audio recordings that impersonate individuals, facilitating identity theft and unauthorized transactions.

- Example: Deepfake videos of executives authorizing large financial transactions, leading to significant financial losses.

Synthetic Identity Fraud

AI-generated synthetic identities combine real and fake information to create new identities that can pass verification checks.

- Example: Fraudsters use synthetic identities to open bank accounts, secure loans, and commit financial fraud.

Automated Attack Tools

Tools like FraudGPT on the dark web provide fraudsters with easy access to AI-generated content and strategies for executing scams.

- Example: Scripts and guides for creating phishing campaigns, generating fake IDs, and conducting social engineering attacks.

Countermeasures

To combat the misuse of Gen AI in financial scams, a combination of regulatory actions and advanced technologies is necessary:

Regulatory Frameworks

Establish standards and guidelines for the ethical use of AI in financial services. Implement stringent compliance requirements for AI deployment, including regular audits and transparency in AI systems.

Advanced AI Technologies

Another impactful approach is to use AI itself to detect AI-driven scams. Use advanced AI models to detect anomalies and suspicious patterns that may indicate fraudulent activities. Ensure that AI systems provide clear explanations for their decisions, helping to identify and mitigate misuse.

Continuous Monitoring and Updating

Implement real-time monitoring systems to detect and respond to emerging threats quickly. Also, keep updating AI models with new data and security patches to maintain resilience against evolving fraud tactics.

Collaboration and Information Sharing

Encourage collaboration between financial institutions, technology providers, and regulatory bodies to share information and best practices. Leveraging threat intelligence platforms also helps institutions stay informed about new and emerging fraud techniques.

Gen AI Vulnerabilities and How to Ensure Security

Generative AI systems, like all AI technologies, are susceptible to various types of attacks that can compromise their effectiveness and integrity. Key vulnerabilities include:

Adversarial Attacks

Adversarial attacks involve manipulating the input data in order to deceive AI models into making false predictions. For instance, slight alterations to transaction data can cause an AI system to misclassify or overlook fraudulent activity.

- Mitigation: Implementing robust data validation and anomaly detection mechanisms can help identify and counteract adversarial inputs.
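To see how a slight alteration can flip a decision, consider a toy linear fraud scorer. The weights, bias, feature values, and perturbation size below are hypothetical. The attack nudges each feature by a small amount against the sign of its weight (the idea behind the fast gradient sign method, applied to a linear model). The margin-based rule shown at the end is one illustrative hardening step and is an assumption of this sketch, not the validation approach named above.

```python
def fraud_score(features, weights, bias):
    """Linear score; a positive value means the transaction is flagged."""
    return sum(w * x for w, x in zip(weights, features)) + bias

def adversarial_shift(features, weights, eps):
    """Move each feature by eps in the direction that lowers the score."""
    sign = lambda w: 1 if w > 0 else -1 if w < 0 else 0
    return [x - eps * sign(w) for x, w in zip(features, weights)]

def robust_flag(features, weights, bias, eps):
    """Flag if any input within eps per feature could have scored positive.

    For a linear model the score can drop by at most eps * sum(|w|).
    """
    margin = eps * sum(abs(w) for w in weights)
    return fraud_score(features, weights, bias) + margin > 0

weights, bias = [0.8, 0.5, -0.3], -1.0   # hypothetical model
txn = [1.0, 0.6, 0.2]                    # hypothetical scaled features
eps = 0.05

evasive = adversarial_shift(txn, weights, eps)

print(fraud_score(txn, weights, bias) > 0)       # original decision
print(fraud_score(evasive, weights, bias) > 0)   # decision after the small shift
print(robust_flag(evasive, weights, bias, eps))  # margin-based decision
```

The margin makes the model harder to evade but also flags more borderline legitimate transactions, so the value of `eps` is a trade-off rather than a free improvement.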

Data Poisoning

Data poisoning occurs when malicious data is injected into the training dataset, corrupting the model’s learning process and leading to inaccurate or biased outputs.

- Mitigation: Ensuring the integrity and quality of training data through secure data collection methods and regular auditing is essential to mitigate this risk.
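One simple building block for such audits is a cryptographic fingerprint of the approved training set, recomputed before each training run. The sketch below uses SHA-256 over a canonical JSON encoding; the record layout is hypothetical, and a fingerprint only detects changes made after approval, not bad data that was present from the start.

```python
import hashlib
import json

def fingerprint(records):
    """SHA-256 over a canonical JSON encoding of the records."""
    payload = json.dumps(records, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

# Hypothetical approved training data.
approved = [
    {"id": 1, "amount": 120.0, "label": "legit"},
    {"id": 2, "amount": 9800.0, "label": "fraud"},
]
baseline = fingerprint(approved)

# Simulate a poisoning attempt that flips one label.
tampered = [dict(r) for r in approved]
tampered[1]["label"] = "legit"

print(fingerprint(approved) == baseline)
print(fingerprint(tampered) == baseline)
```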

Model Inversion

Model inversion attacks enable attackers to reconstruct sensitive training or input data from the model’s outputs, posing significant privacy risks.

- Mitigation: Techniques such as differential privacy can be implemented to protect sensitive information from being exposed.
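The core mechanism behind differential privacy can be shown on a simple counting query. This sketch applies the Laplace mechanism to an aggregate statistic rather than to model training (where variants such as differentially private gradient descent are used instead); the data and the epsilon value are assumptions for illustration.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two independent Exp(1) draws is Laplace(0, 1).
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))

def private_count(values, predicate, epsilon, rng):
    """Count matching rows, adding Laplace noise with scale 1/epsilon.

    A counting query changes by at most 1 when one record is added or
    removed, so its sensitivity is 1.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(0)
amounts = [120, 9800, 45, 15000, 300, 11000]  # hypothetical transaction amounts

noisy = private_count(amounts, lambda a: a > 5000, epsilon=0.5, rng=rng)
print(noisy)  # a noisy version of the true count (3)
```

Smaller values of epsilon add more noise and give stronger privacy, at the cost of less accurate answers.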

Data Bias and Lack of Transparency

Biases in AI can result from using non-representative datasets, leading to unjust and unequal treatment of different groups. This challenge highlights the need for a conscientious approach to AI development, where potential biases are identified and addressed proactively.

- Mitigation: Using diverse and representative datasets and applying fairness constraints during training helps reduce biases. Most importantly, continuous monitoring of AI outputs for biased behavior allows for necessary adjustments to be made to the models.

Additionally, developing explainable AI models that provide insights into their decision-making processes helps identify and address potential biases. Transparency in AI systems builds trust among stakeholders and ensures accountability in automated decisions.
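Monitoring outputs for biased behavior can start with a basic comparison such as the false positive rate per customer group, meaning the share of legitimate transactions that were flagged. The group labels and counts below are hypothetical, and this is only one of several fairness measures an institution might track.

```python
def false_positive_rates(records):
    """Per-group share of legitimate transactions that were flagged."""
    flagged, legit = {}, {}
    for r in records:
        if not r["is_fraud"]:
            legit[r["group"]] = legit.get(r["group"], 0) + 1
            flagged[r["group"]] = flagged.get(r["group"], 0) + int(r["flagged"])
    return {g: flagged[g] / legit[g] for g in legit}

# Hypothetical outcomes: 10 legitimate transactions per group,
# plus one true fraud case that the rate calculation should ignore.
records = (
    [{"group": "A", "is_fraud": False, "flagged": i < 1} for i in range(10)]
    + [{"group": "B", "is_fraud": False, "flagged": i < 3} for i in range(10)]
    + [{"group": "A", "is_fraud": True, "flagged": True}]
)

rates = false_positive_rates(records)
gap = max(rates.values()) - min(rates.values())
print(rates)
print(gap)
```

A persistent gap between groups is a signal to revisit the training data or the model, not proof of discrimination on its own.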

To effectively balance AI innovation with security risks, financial institutions can also adopt some best practices in their regular operations, such as:

Securing Training Data

- Data Anonymization: Ensuring that training data is anonymized can prevent the exposure of sensitive customer information.

- Secure Storage and Access: Using secure methods for data collection and storage, and implementing access controls, helps protect the data from unauthorized access and manipulation.

- Regular Audits: Conducting regular audits of datasets can help detect and rectify any data integrity issues.
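As a small illustration of protecting identifiers in training data, the sketch below replaces a customer ID with a keyed hash (HMAC-SHA256). Strictly speaking this is pseudonymization rather than full anonymization, since other fields may still identify a person. The field names and the placeholder key are assumptions; a real key would come from a secrets manager.

```python
import hashlib
import hmac

def pseudonymize(customer_id, secret_key):
    """Replace an identifier with a keyed, non-reversible token."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()

SECRET_KEY = b"replace-with-a-key-from-a-secrets-manager"  # placeholder only

row = {"customer_id": "CUST-00123", "amount": 250.0}  # hypothetical record
safe_row = {**row, "customer_id": pseudonymize(row["customer_id"], SECRET_KEY)}

print(safe_row)
```

Because the same ID always maps to the same token, records for one customer can still be linked for model training without exposing the original identifier.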

Protecting AI Models

- Model Encryption: Encrypting AI models can safeguard them from tampering and theft.

- Access Control: Implementing robust authentication mechanisms ensures that only authorized personnel can access and modify AI models.

- Security Audits: Regular security audits of AI models can identify potential vulnerabilities and ensure compliance with security standards.

Monitoring and Updating AI Systems

- Anomaly Detection: Continuous monitoring of AI systems for anomalies can help detect emerging threats and unusual patterns indicative of fraud.

- Regular Updates: Updating AI models with new data and security patches is essential to maintain their resilience against new attack vectors.

- Human Oversight: Integrating human oversight into AI workflows ensures that AI decisions are validated and contextualized by human expertise.

Comprehensive Risk Assessment

Conduct thorough risk assessments to identify and address potential vulnerabilities in AI systems. Evaluate the security of data sources, model integrity, and the overall deployment environment.

Cross-Disciplinary Collaboration

Foster collaboration between AI developers, cybersecurity experts, and industry regulators to develop robust security protocols. Address the unique challenges of AI systems through a collaborative approach.

Regular Security Audits

Implement regular security audits and penetration testing to identify and rectify vulnerabilities. Maintain the integrity and security of AI applications through proactive measures.

Employee Training and Awareness

Educate employees about the potential risks and best practices associated with AI systems. Focus on recognizing and understanding security threats and using AI solutions effectively in secure frameworks.

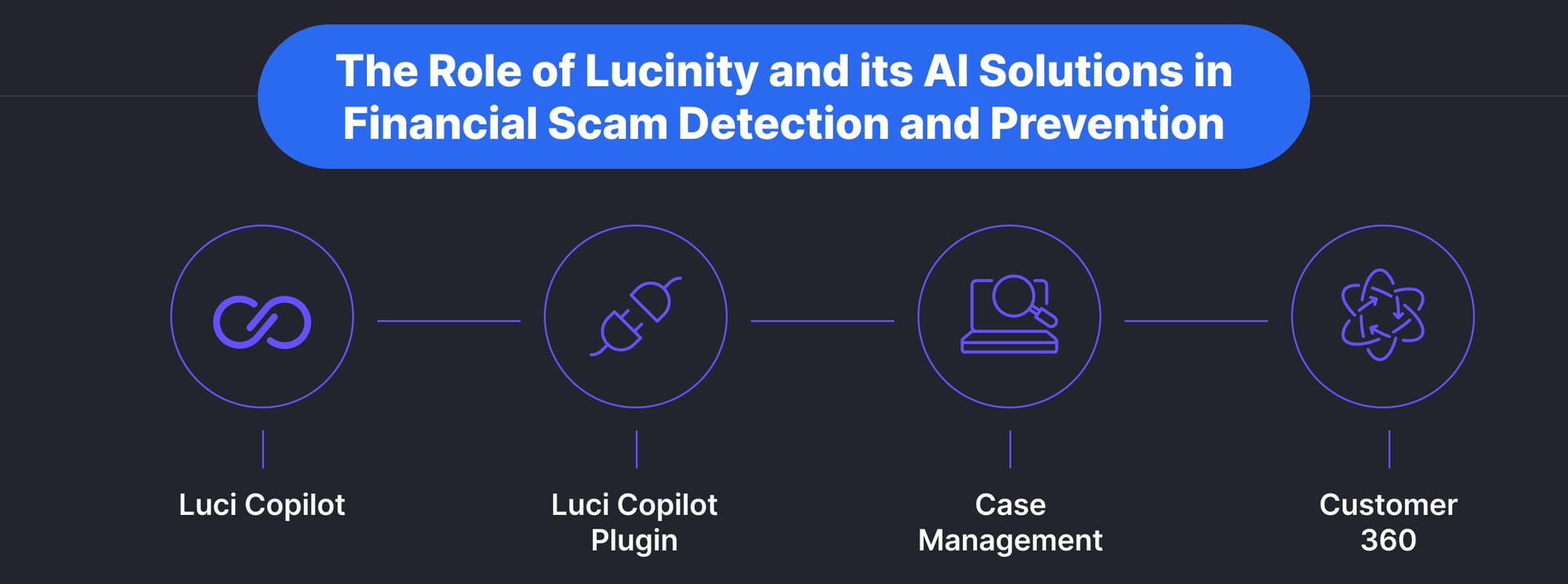

Lucinity is a leader in innovating generative AI solutions to counter financial scams while maintaining robust safety measures. The company provides a comprehensive SaaS platform equipped with advanced AI tools designed to enhance the capabilities of financial institutions in detecting and preventing fraud. Moreover, the platform is based on a secure Microsoft Azure framework with specialized security measures. Here’s how Lucinity’s AI innovations stand out in efforts against financial scams:

Luci Copilot

Luci, Lucinity’s generative AI-powered copilot, simplifies the complexities of financial crime investigations by transforming intricate financial data into clear, actionable insights, significantly reducing investigation times. It is a key part of Lucinity’s overall case management solution. Key functionalities of Luci include:

- Case Summarization: Provides summaries of financial crime cases, highlighting key risk indicators and offering concise overviews to speed up decision-making.

- Business Validation: Cross-references declared business information with online presence and official records to validate legitimacy, reducing the risk of fraudulent entities.

- Adverse Media and Negative News Searches: Conducts detailed searches for negative news or adverse media related to entities involved in transactions, providing comprehensive background checks.

- Money Flow Visualization: Creates visual representations of transaction flows, showing incoming and outgoing transactions to help investigators trace the movement of funds and spot suspicious activities.

Luci Copilot Plugin

The Luci Copilot Plugin integrates generative AI capabilities into any web-based tech stack, enhancing existing fraud prevention and compliance systems without high costs or long implementation times. Key features include:

- Seamless Integration: Integrates effortlessly with all web-based enterprise applications, delivering instant ROI and boosting productivity by up to 90%.

- Flexible and Configurable: Features can be customized to fit specific business needs through a no-code, drag-and-drop interface, providing maximum configurability and control.

- Rapid Deployment: The plugin can be implemented quickly, often within days, reducing time to market and rapidly enhancing fraud detection capabilities.

Case Management

Lucinity’s case management platform integrates seamlessly with existing systems, unifying disparate sources of data into a single coherent platform. This streamlines the alert, investigation, and reporting processes, boosting efficiency and accuracy in fraud detection. Key aspects include:

- Unified Data Platform: Consolidates data from various sources, reducing the complexity of managing multiple systems.

- Streamlined Workflows: Automates compliance workflows, enhancing productivity by eliminating manual tasks.

- Customizable Alerts and Reports: Offers customizable alert settings and report generation capabilities, improving relevance and effectiveness.

Customer 360

Lucinity’s Customer 360 feature integrates data from multiple sources to provide a holistic analysis of customer behavior, which is essential for understanding the full context of customer activities and identifying potential risks. Key functions include:

- Comprehensive Data Integration: Aggregates data from KYC records, transaction histories, and external datasets, providing a detailed profile of each customer.

- Dynamic Risk Scoring: Continuously updates customer risk scores based on new data, improving fraud detection accuracy.

Other tools include the transaction monitoring system, regulatory reporting tool, and SAR manager, which together support compliance while reducing the time and effort involved. Lucinity’s suite of tools exemplifies how generative AI can be used to enhance fraud detection and prevention in the financial sector.

Generative AI offers great potential for enhancing fraud detection and prevention in the financial sector. By leveraging advanced technologies such as autoencoders, large language models, and generative adversarial networks, financial institutions can significantly improve their ability to detect and prevent complex fraud schemes. Here are some important takeaways:

- Enhanced Fraud Detection Capabilities: Generative AI models can analyze vast amounts of data and identify sophisticated fraud patterns more accurately than traditional methods.

- Reduction in Investigation Times: Tools like Luci Copilot and its plugin streamline the investigation process, reducing the time required to analyze and respond to potential fraud from hours to minutes.

- Improved Data Privacy and Security: By generating synthetic data that mimics real-world transactions, generative AI enhances data privacy and security, mitigating compliance hurdles and reducing the risk of data breaches.

- Balanced and Unbiased Fraud Detection: Generative AI can create balanced datasets that represent diverse customer profiles, helping to reduce biases in fraud detection models and ensuring fair treatment of all customers.

While the benefits of generative AI in financial scam investigations are substantial, it is equally important to address the security risks associated with its use. Implementing comprehensive security measures, such as securing training data, protecting AI models, and continuous monitoring, is key to mitigating vulnerabilities and misuse of Gen AI in financial scams. Additionally, regulatory frameworks and collaboration between industry stakeholders play an important role in ensuring the ethical and secure deployment of AI technologies.

To learn more about how Lucinity's AI solutions can help your organization enhance its fraud investigation and prevention capabilities, visit Lucinity.